In an ever-changing IT landscape, one has to keep up with the latest technology. That in itself is too much, so one has to decide what fits best personally and what the market demands. Some years ago, ‘everything will be containers’ was already being shouted everywhere. I believed in this concept. I had the chance to do something with Docker. Kubernetes I had only heard of. I started reading about it, but did not get a clue. Too many concepts that did not fit in my brain. Yet. Note that I learn by doing; I cannot concentrate very well on reading.

By now I feel comfortable with Docker. I can build my own containers and understand most of the concepts. Guru? In the land of the blind, the one-eyed man is king. So yes, where I work I am King Guru. But this self-appointed guru must march on, so I decided to become a Certified Kubernetes Administrator (CKA). Not only because the market demands it, but also to challenge myself. And as an extra challenge, I will document how I build my lab. Then I will be King Guru with spectacles; one spectacle, that is.

I must admit I have a terrible aversion to certification. It does not mean much to me. Having a certificate does not mean you have mastered the job. It only means that you had strong nerves and knowledge A at moment B. Many tasks you do as an admin, you only do once or twice a year. And even then, they are often automated with Ansible. After two or three years you have to recertify. Why? Commerce! It would be better if one had to recertify for a driver's license every five years. Anyway, CKA: a challenge it will be.

My infrastructure

The infrastructure I run is pretty simple. I have a six-year-old PC (i7, 16 GB) as a server running CentOS and a recent Intel NUC (i5, 32 GB) running Debian Buster. Both run libvirt/KVM, on which the VMs for Kubernetes will run. All VMs run CentOS 7 with 2 GB of memory and 2 vCPUs. The VMs are PXE-booted and installed using a Kickstart file. The VMs get a fixed IP from me, but if you use dnsmasq you should be fine as well. Everything is on the same network. Nothing fancy, just a lab environment to get me past my exam. I have chosen to run 9 nodes (kub0 to kub8), but 3 nodes would have been enough. Since I have an Ansible script to configure the nodes, 3 or 9 or 27 nodes makes no difference. Besides, as a one-eyed King I want a big realm! So 9 nodes…
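For illustration, an Ansible inventory for such a lab could look like the sketch below. The group name `kubernetes` is the one the playbook targets; the file name `inventory.yml` is hypothetical, and the sketch assumes that the short names kub0 to kub8 resolve on your network (DNS or /etc/hosts).

```yaml
# inventory.yml (hypothetical file name)
# Assumption: kub0 .. kub8 resolve via DNS or /etc/hosts.
all:
  children:
    kubernetes:
      hosts:
        # Range pattern: expands to kub0, kub1, ... kub8
        kub[0:8]:
```

With something like this in place, `ansible -i inventory.yml kubernetes -m ping` should reach every node, and shrinking the realm to three nodes is a matter of changing the range.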

To configure the nodes, there is outstanding documentation at https://kubernetes.io/docs/home/ . But there is a downside: Kubernetes is evolving fast, so by the time you read this, it has changed again. By reading ‘on the internet’ I found out that my VMs need the following configuration changes to be able to act as a Kubernetes node. Perhaps you will need some extra changes.

kub0 is used as the admin node (control plane). The rest are worker nodes.

The Configuration

The VMs need the following configuration:

- Installation of the docker repository

- Installation of the Kubernetes repository

- Installation of kubeadm, docker, chrony, bash-completion and some additional packages as you like.

- Make sure that docker and kubelet are started on all hosts. chronyd must be started as well, since the Kubernetes hosts need to be in sync in time.

- I don’t want a firewall, so I disable firewalld. Probably not a good idea in a production environment.

- Kubernetes dislikes swap, so disable swap and remove the swap from /etc/fstab

- Create a daemon.json file

- Restart the docker daemon.

The daemon.json file I use contains the following:

    {
      "exec-opts": ["native.cgroupdriver=systemd"],
      "log-driver": "json-file",
      "log-opts": {
        "max-size": "100m"
      },
      "storage-driver": "overlay2"
    }

This is because CentOS 7 uses cgroups managed by systemd, while Docker by default uses its own cgroupfs driver. If you do not change it, Docker fails to work properly with Kubernetes. If you want to read more about it: https://github.com/kubernetes/kubeadm/issues/1394

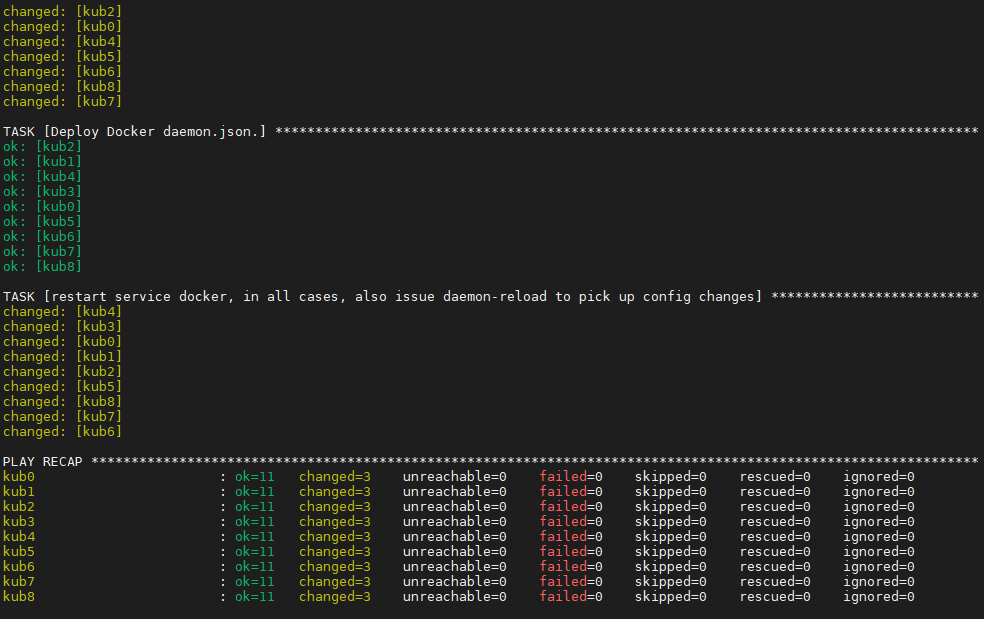
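To check that Docker really picked up the systemd cgroup driver after a restart, two tasks like the ones below could be added to a playbook. This is only a sketch: it assumes that `docker info` prints a `Cgroup Driver:` line, and the variable name `docker_info` is made up for the example.

```yaml
# Sketch: verify the cgroup driver that Docker reports.
# 'docker_info' is a hypothetical variable name.
- name: Read docker info
  command: docker info
  register: docker_info
  changed_when: false   # read-only, never report a change

- name: Fail if Docker does not use the systemd cgroup driver
  assert:
    that:
      - "'Cgroup Driver: systemd' in docker_info.stdout"
    msg: "Docker is not using the systemd cgroup driver"
```

A plain substring match keeps the check simple and avoids having to escape Docker's `{{ }}` format syntax inside Ansible's own templating.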

If you only have about three hosts to configure, your left and right hand will do the job. But a fourth host gets boring. A ninth is a PITA. So, automate it. In my Kingdom, I am not only the guru on K8s, but also on Ansible. So I wrote this awesome script that configures all hosts for me. It is awesome because it works for me. I am pretty sure that in other kingdoms a better script could have been made. There is absolutely no logic in this script, only a serial execution of actions.

    ---
    - hosts: kubernetes
      remote_user: root
      tasks:
        - name: Add repositories for Docker
          yum_repository:
            name: docker
            description: Official Docker repository
            baseurl: https://download.docker.com/linux/centos/7/$basearch/stable
            gpgcheck: yes
            gpgkey: https://download.docker.com/linux/centos/gpg

        - name: Add repositories for Kubernetes
          yum_repository:
            name: Kubernetes
            description: Official Kubernetes repository
            baseurl: https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64
            gpgcheck: yes
            # Two keys, passed as a list
            gpgkey:
              - https://packages.cloud.google.com/yum/doc/yum-key.gpg
              - https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg

        # firewalld is installed so that it can be stopped and disabled below
        - name: Install required packages
          yum:
            name:
              - firewalld
              - bash-completion
              - yum-utils
              - docker-ce-18.06.3.ce-3.el7.x86_64
              - chrony
              - kubeadm
              - nfs-utils
            state: present

        - name: Update to latest level
          yum:
            name: "*"
            state: latest

        - name: Make sure services are enabled/started
          service:
            name: "{{ item }}"
            enabled: yes
            state: started
          with_items:
            - docker
            - kubelet
            - chronyd

        - name: Make sure services are disabled/stopped
          service:
            name: "{{ item }}"
            enabled: no
            state: stopped
          with_items:
            - firewalld

        - name: Disable SWAP since kubernetes can't work with swap enabled (1/2)
          command: swapoff -a
          # when: kubernetes_installed is changed

        - name: Disable SWAP in fstab since kubernetes can't work with swap enabled (2/2)
          replace:
            path: /etc/fstab
            # (?!#) skips lines that are already commented out, so a rerun changes nothing
            regexp: '^(?!#)(.*?\sswap\s+swap.*)$'
            replace: '# \1'
          # when: kubernetes_installed is changed

        - name: Deploy Docker daemon.json.
          copy:
            src: files/daemon.json
            dest: /etc/docker/daemon.json

        - name: restart service docker, in all cases, also issue daemon-reload to pick up config changes
          systemd:
            state: restarted
            daemon_reload: yes
            name: docker

But it works!
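A small read-only play could back up that claim. The sketch below is an illustration and not part of the script above: the file name `verify.yml` is hypothetical, and it assumes Ansible 2.5 or later (for `service_facts`) and systemd unit names such as `docker.service`.

```yaml
---
# verify.yml (hypothetical file name): read-only checks, changes nothing.
- hosts: kubernetes
  remote_user: root
  gather_facts: yes
  tasks:
    - name: Swap must be off
      assert:
        that:
          - ansible_swaptotal_mb == 0
        msg: "Swap is still enabled on {{ inventory_hostname }}"

    - name: Collect service states
      service_facts:

    - name: docker and chronyd must be running, firewalld must not
      assert:
        that:
          - ansible_facts.services['docker.service'].state == 'running'
          - ansible_facts.services['chronyd.service'].state == 'running'
          - ansible_facts.services['firewalld.service'].state != 'running'
```

The kubelet is deliberately left out of the checks: as long as the cluster has not been initialised, it has no configuration to run with and keeps restarting.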

Now I am ready to start configuring Kubernetes.